
csv ( "s3a://test-hyunho/data/OnlineRetail.csv", header = True )ĭata. > spark = ( 'test-spark' ).master ( 'local' ).getOrCreate ( ) > data = (CSV_PATH, header =True ) > data.show ( 1, False, vertical =True ) aws s3와 연결할 수 있게 설정 pyspark env $ conda create -n pyspark python = 3.8 -y

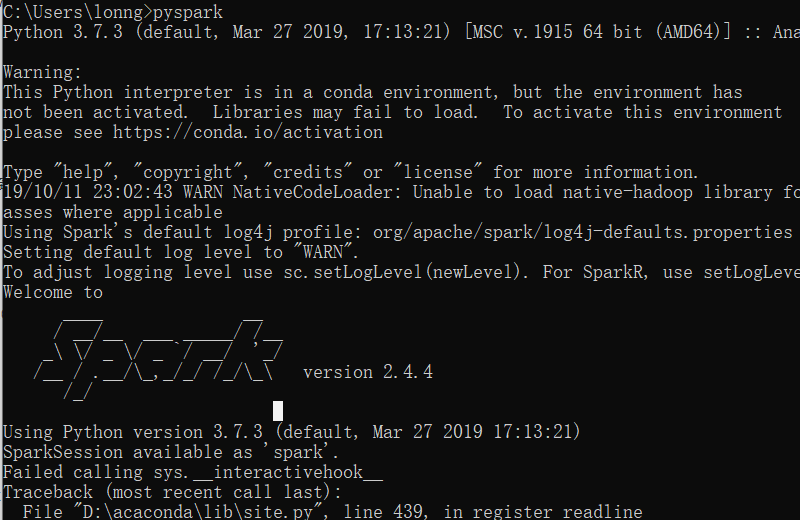

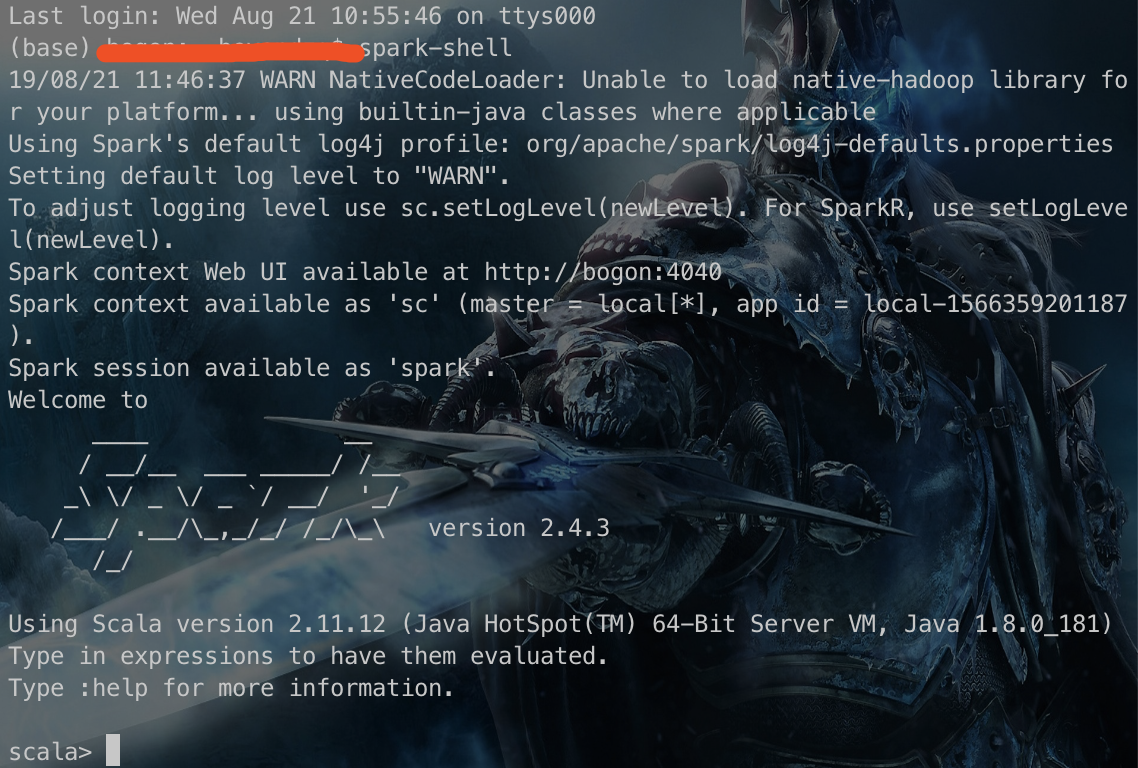

Using Scala version 2.12.15, OpenJDK 64-Bit Server VM, 11.0.11 Once completed do brew edit apache-spark and edit the Pachecos-spark. Install latest Spark by brew install apache-spark. But is not available any more in the Brew listing but still you can make it. > pip install pyspark3.0.2 or > conda install -c conda-forge pyspark. 아래처럼 나오면 setting 완료 $ spark-submit -versionĢ2/11/27 16:44:25 WARN Utils: Your hostname, hyunhoui-MacBookPro.local resolves to a loopback address: 127.0.0.1 using 172.20.10.6 instead (on interface en0 ) 22/11/27 16:44:25 WARN Utils: Set SPARK_LOCAL_IP if you need to bind to another address I need to install Apache Spark 2.4.0 version specifically on my MacBook. Actually, if you are using Mac or Linux, you can still use Homebrew to install JDK v8, here are the steps. Bottom line: the Apple M1 is an impressive feat of engineering that will shake up the industry. This makes it a much harder sell than the MacBook Air. PATH =CURRENT_DIR/spark-3.3.1-bin-hadoop3/bin: $PATH 2 Photo by Sumudu Mohottige on Unsplash Apple Silicon is the processor architecture used inside Apple’s computer chips. Like the Air, the MacBook Pro lacks connectivity, expansion, and RAM options desired by enthusiasts and professionals, yet it only delivers marginally better performance compared to the Air. HADOOP_HOME = $CURRENT_DIR/spark-3.3.1-bin-hadoop3 An example of this is using conda to install the environment and using another installer for the metal plugin. There is no published method for installing Tensorflow, the leading ML API, on a Macbook Pro M1 that actually works without breaking something else. For the next steps, you need to download the file that you can get in this link. This given that it is such an important topic affecting the adoption of Macbook Pro M1s. First of all, we need to call the Python 3.9.1 image from the Docker Hub: FROM python:3.9.1.

# ~/.zshrc에 아래 정보 추가 SPARK_HOME = $CURRENT_DIR/spark-3.3.1-bin-hadoop3 In order to run Spark and Pyspark in a Docker container we will need to develop a Dockerfile to run a customized Image. With Xcode 11 and later it is now possible to build Universal 2 binaries which work on Apple Silicon.

OpenJDK 64-Bit Server VM AdoptOpenJDK-11.0.11+9 (build 11.0.11+9, mixed mode ) spark install Per python website Installer news 3.9.1 is the first version of Python to support macOS 11 Big Sur. OpenJDK Runtime Environment AdoptOpenJDK-11.0.11+9 (build 11.0.11+9 ) To install the latest version of JDK, open your terminal and execute the following command: brew install openjdk To check if the installation was successful, run the following command: java -version 2. Miniconda3-p圓8_4.12.0-MacOSX-arm64.sh java install Install Java Development Kit (JDK) PySpark requires Java 8 or later to run.
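Reading an `s3a://` path such as `s3a://test-hyunho/data/OnlineRetail.csv` requires two things: the `hadoop-aws` connector on Spark's classpath and a source of AWS credentials. The following is a minimal sketch of one possible configuration, not necessarily the one used in this post.

It makes these assumptions:

* `spark-3.3.1-bin-hadoop3` bundles Hadoop 3.3.2, so `hadoop-aws` is pinned to that version. Check the `hadoop-client-*` jar names under `$SPARK_HOME/jars` to confirm.
* Credentials come from the AWS default provider chain, such as environment variables or `~/.aws/credentials`.
* `s3a_conf` and `build_session` are hypothetical helper names.

```python
"""Sketch: SparkSession settings for reading s3a:// paths (assumptions noted inline)."""

# Assumption: spark-3.3.1-bin-hadoop3 ships Hadoop 3.3.2, and hadoop-aws
# must match the bundled Hadoop version. Verify against $SPARK_HOME/jars.
HADOOP_AWS_VERSION = "3.3.2"

# Assumption: credentials are resolved by the AWS SDK default chain
# (environment variables, ~/.aws/credentials profile, instance profile, ...).
DEFAULT_PROVIDER = "com.amazonaws.auth.DefaultAWSCredentialsProviderChain"


def s3a_conf(hadoop_aws_version: str = HADOOP_AWS_VERSION,
             credentials_provider: str = DEFAULT_PROVIDER) -> dict:
    """Return Spark config entries that enable the S3A filesystem (hypothetical helper)."""
    return {
        # Resolved from Maven (with its AWS SDK dependency) when the JVM starts.
        "spark.jars.packages": f"org.apache.hadoop:hadoop-aws:{hadoop_aws_version}",
        # Keys prefixed with spark.hadoop. are copied into the Hadoop configuration.
        "spark.hadoop.fs.s3a.impl": "org.apache.hadoop.fs.s3a.S3AFileSystem",
        "spark.hadoop.fs.s3a.aws.credentials.provider": credentials_provider,
    }


def build_session(app_name: str = "test-spark", master: str = "local"):
    """Create a SparkSession with the S3A settings applied (hypothetical helper; needs pyspark and Java)."""
    # Imported here so that s3a_conf() can be used without pyspark installed.
    from pyspark.sql import SparkSession

    builder = SparkSession.builder.appName(app_name).master(master)
    for key, value in s3a_conf().items():
        builder = builder.config(key, value)
    return builder.getOrCreate()
```

With `pyspark` and Java installed, the following should read the file:

* `spark = build_session()`
* `spark.read.csv("s3a://test-hyunho/data/OnlineRetail.csv", header=True).show(1, False, vertical=True)`

`spark.jars.packages` only takes effect when the JVM starts, so call `build_session()` before any other SparkSession is created in the same Python process.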
